Link validation

Describes how to track broken links for a website using the link validator scheduled job.

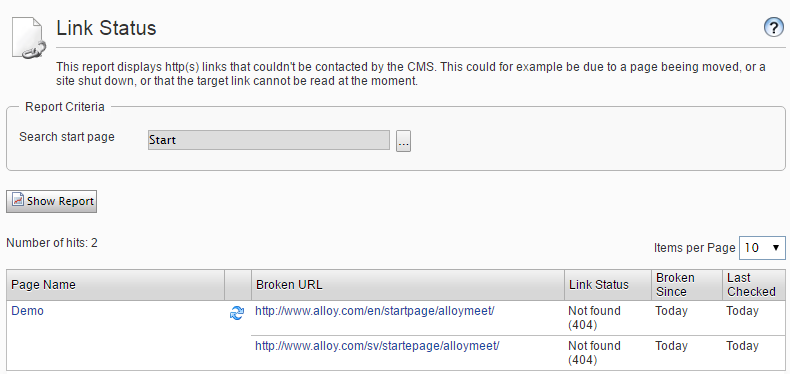

In Optimizely Content Management System (CMS), you can track broken links for a website using the Link Validator scheduled job. The job checks the links in tblContentSoftLink, sends a HEAD request to each one, and saves the status of each link back to tblContentSoftLink. The result of the validation job is available in the Report Center as the Link Status report.

The scheduled job first gets a batch of up to 1,000 links from tblContentSoftLink. The batch contains only links that are unchecked or that were last checked before the current run started. The job uses the date it last checked each link and the recheck interval to determine whether to check the link again.
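The selection rule above can be sketched in Python. This is only an illustration of the described behavior, not the job's actual implementation: the `Link` class, `is_due`, and `next_batch` are hypothetical names, and the handling of links previously found broken (eligible again on the next run) is an assumption, since the text only states that working links wait for the recheck interval.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

BATCH_SIZE = 1000  # batch size stated in the text


@dataclass
class Link:
    """Hypothetical stand-in for a row in tblContentSoftLink."""
    url: str
    last_checked: Optional[datetime] = None  # None means never checked
    is_working: Optional[bool] = None        # result of the last check, if any


def is_due(link: Link, job_start: datetime, recheck_interval: timedelta) -> bool:
    """Return True if the link should be checked during this run."""
    if link.last_checked is None:
        return True  # unchecked links are always eligible
    if link.last_checked >= job_start:
        return False  # already checked during the current run
    if link.is_working:
        # Working links wait until the recheck interval has elapsed.
        return job_start - link.last_checked >= recheck_interval
    # Assumption: links last found broken are eligible again on the next run.
    return True


def next_batch(links: List[Link], job_start: datetime,
               recheck_interval: timedelta, size: int = BATCH_SIZE) -> List[Link]:
    """Pick at most `size` links that are due for checking."""
    due = [link for link in links if is_due(link, job_start, recheck_interval)]
    return due[:size]
```

With a 30-day recheck interval, a working link checked 11 days before the run starts is skipped, while one checked two months earlier is picked up again.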

The job checks each link in the batch using a HEAD request, provided the server's robots.txt allows it. No host is contacted more than once every five seconds. If a link points to a host that was checked in the last five seconds, the job waits five seconds and then checks the link.
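The per-host delay can be sketched as follows. This is a minimal illustration under stated assumptions: `HostThrottle` and `wait_for` are hypothetical names, the clock and sleep functions are passed in so the logic can be tried without real waiting, and the robots.txt check and the HEAD request itself are left out.

```python
from urllib.parse import urlsplit

HOST_DELAY_SECONDS = 5.0  # delay between checks on the same host, per the text


class HostThrottle:
    """Tracks when each host was last checked (hypothetical helper)."""

    def __init__(self, clock, sleep, delay=HOST_DELAY_SECONDS):
        self._clock = clock    # callable returning the current time in seconds
        self._sleep = sleep    # callable that blocks for a number of seconds
        self._delay = delay
        self._last_hit = {}    # host name -> time of the last check

    def wait_for(self, url):
        """Block if the URL's host was checked within the delay window."""
        host = urlsplit(url).hostname or ""
        now = self._clock()
        last = self._last_hit.get(host)
        if last is not None and now - last < self._delay:
            # The text says the job waits five seconds, then checks the link.
            self._sleep(self._delay)
            now = self._clock()
        self._last_hit[host] = now
        return host
```

In real use, the throttle could be created as `HostThrottle(time.monotonic, time.sleep)` and `wait_for(url)` called immediately before each request. Links on different hosts never wait for each other.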

The job saves the status of the link, the date it checked the link, and, if possible, the HTTP status code to tblContentSoftLink. It also records when it finds a link to be broken. After it checks the first batch of links, it fetches the next batch from the database.

The job continues until it cannot get any more unchecked links from the database or its run time exceeds the value set in maximumRunTime. The job also stops if it finds too many consecutive errors on external links (see externalLinkErrorThreshold) or if there is a general network problem on the server running the site.
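The stop conditions can be summarized in a short Python sketch. It is a simplified model rather than the job's real code: `run_job`, `fetch_batch`, and `check_link` are hypothetical names, every link is treated as external, the run time is checked before each link (an assumption), and general network failures are not modeled.

```python
def run_job(fetch_batch, check_link, clock,
            maximum_run_time, external_link_error_threshold):
    """Process batches until a stop condition is met and return the reason.

    fetch_batch: callable returning the next list of URLs (empty when done)
    check_link:  callable returning True if the link works, False on error
    clock:       callable returning the current time in seconds
    """
    started = clock()
    consecutive_errors = 0
    while True:
        batch = fetch_batch()
        if not batch:
            return "no more links"
        for url in batch:
            # Assumption: the run time is evaluated before each link check.
            if clock() - started > maximum_run_time:
                return "maximum run time exceeded"
            if check_link(url):
                consecutive_errors = 0  # a working link resets the streak
            else:
                consecutive_errors += 1
                # Abort on more consecutive errors than the configured value.
                if consecutive_errors > external_link_error_threshold:
                    return "too many consecutive errors"
```

Counting only consecutive errors means that a few scattered broken links never abort the run, while an unbroken streak of failures (which more likely indicates a network problem than many bad links) does.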

Configure the Link Validator

All settings are optional; use them to customize the behavior of the link validation job. Add the <linkValidator> node as a child of the <episerver> node in the web.config file. Example:

    <linkValidator externalLinkErrorThreshold="10"
                   maximumRunTime="4:00:00"
                   recheckInterval="30.00:00:00"
                   userAgent="EPiServer LinkValidator"
                   proxyAddress="http://myproxy.mysite.com"
                   proxyUser="myUserName"
                   proxyPassword="secretPassword"
                   proxyDomain=".mysite.com"
                   internalLinkValidation="Api">
      <excludePatterns>
        <add regex=".*doc"/>
        <add regex=".*pdf"/>
      </excludePatterns>
    </linkValidator>

To configure the behavior of the link validation job, you have the following options:

- externalLinkErrorThreshold – The job aborts if there are more consecutive errors on external links than the configured value.
- maximumRunTime – The maximum time the scheduled job executes.
- recheckInterval – A link that was validated as working is not rechecked until the configured time has elapsed.
- userAgent – The user agent string to use when validating a link.
- proxyAddress – Web proxy address for the link checker to use when validating links.
- proxyUser – Web proxy user to authenticate the proxy connection.
- proxyPassword – Web proxy password to authenticate the proxy connection.
- proxyDomain – Web proxy domain to authenticate the proxy connection.
- internalLinkValidation – How the link validator handles internal links. Possible values:
  - Off – Internal links are ignored.
  - Api – Validates that the referenced page exists using the internal API. [default]
  - Request – Internal links are validated the same way as external links, using a HEAD request.
- excludePatterns – A list of patterns for links that the link validation job skips. Use the regex attribute to identify which links to skip.
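To make the sample values easier to read, here is a small Python sketch that interprets them. It rests on two assumptions that the text does not confirm: that maximumRunTime and recheckInterval use the .NET TimeSpan text form `[d.]hh:mm:ss`, and that an exclude pattern skips a link when it matches anywhere in the URL. The helper names `parse_timespan` and `is_excluded` are hypothetical.

```python
import re
from datetime import timedelta

# Assumed format: optional "days." prefix followed by hours:minutes:seconds.
_TIMESPAN = re.compile(r"^(?:(\d+)\.)?(\d{1,2}):(\d{2}):(\d{2})$")


def parse_timespan(value: str) -> timedelta:
    """Convert a value such as "30.00:00:00" into a timedelta."""
    match = _TIMESPAN.match(value)
    if match is None:
        raise ValueError(f"unsupported time span: {value!r}")
    days, hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return timedelta(days=days, hours=hours, minutes=minutes, seconds=seconds)


def is_excluded(url: str, patterns) -> bool:
    """Return True if any pattern matches the URL.

    Assumption: a pattern may match anywhere in the URL (search semantics).
    """
    return any(re.search(pattern, url) for pattern in patterns)
```

Under these assumptions, `parse_timespan("4:00:00")` is four hours and `parse_timespan("30.00:00:00")` is thirty days. Note that with search semantics a pattern such as `.*doc` would also skip any URL that merely contains "doc", for example a link to a host named docs.example.com, so a more specific pattern that anchors the file extension may be safer.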

Known limitations

Apart from pages, the link validator does not handle private resources, such as documents and images stored on a local file system, that do not allow anonymous access. If you use forms authentication, the link validator does not validate links to these resources, and they do not appear in the link report. If you use basic or Windows authentication, links to these resources result in 401 (access denied) in the link report. This is the case, for example, for an intranet site with Windows authentication and anonymous access disabled.
