Allowlist

How to configure allowlisting in Optimizely Feature Experimentation.

Allowlisting is one of the easiest ways to QA a flag rule. It lets you run your experiment in any environment and shows a specific variation to up to 50 users that you have selected. When the SDK makes an experiment decision for these users, they bypass audience targeting and traffic allocation to see the chosen variation. Users not on the allowlist must pass audience targeting and traffic allocation to see the live experiment and variations.

For example, imagine creating an experiment that compares Variation A and Variation B. You want to test the experiment's live behavior and show the variations to a select number of key stakeholders. You can create an allowlist that includes the User IDs for the stakeholders who see the live experiment.

To ensure that only your allowlisted users can see the experiment, create an audience targeted to an attribute no user will have or set the experiment's traffic allocation to 0%. After completing quality testing, establish your production settings for audience targeting and traffic allocation.
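The QA setup described above can be sketched in a few lines. This is a simplified model of the decision order, not the SDK's implementation: the function `choose_variation`, its parameters, and the user IDs are hypothetical names chosen for illustration.

```python
from typing import Mapping, Optional


def choose_variation(
    user_id: str,
    allowlist: Mapping[str, str],
    experiment_running: bool,
    passes_audience: bool,
    in_traffic_allocation: bool,
    bucketed_variation: str,
) -> Optional[str]:
    """Return the variation key the user sees, or None if they are excluded."""
    if not experiment_running:
        # Allowlisting has no effect unless the experiment is running.
        return None
    if user_id in allowlist:
        # Allowlisted users skip audience targeting and traffic allocation.
        return allowlist[user_id]
    if passes_audience and in_traffic_allocation:
        return bucketed_variation
    return None


# Hypothetical QA setup: traffic allocation is 0%, so nobody is bucketed normally.
allowlist = {"stakeholder-1": "variation_a", "stakeholder-2": "variation_b"}

qa_result = choose_variation("stakeholder-1", allowlist, True, False, False, "variation_b")
visitor_result = choose_variation("visitor-42", allowlist, True, True, False, "variation_a")
print(qa_result)       # variation_a
print(visitor_result)  # None
```

With traffic at 0%, only the allowlisted stakeholder receives a variation; every other user is excluded until you restore your production settings.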

Note

Optimizely Feature Experimentation allows you to allowlist up to 50 users per experiment.

Allowlists exist in your datafile in the forcedVariations field. You do not need to do anything differently in the SDK; if you have set up an allowlist, the Decide call will force the variation output based on your provided allowlist. Allowlisting overrides audience targeting and traffic allocation. Allowlisting does not work if the experiment is not running, but you can set an experiment to 0% traffic or start in a staging environment to test with allowlisting.
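As an illustration of where the allowlist lives, the following sketch reads the forcedVariations field from a heavily trimmed datafile fragment. It assumes forcedVariations maps each User ID to a variation key; the experiment key, User IDs, variation keys, and the helper `allowlisted_variation` are hypothetical, and a real datafile contains many more fields.

```python
import json
from typing import Optional

# Trimmed, hypothetical datafile fragment; real datafiles hold many more fields.
DATAFILE_FRAGMENT = """
{
  "experiments": [
    {
      "key": "checkout_test",
      "forcedVariations": {
        "qa_user_1": "variation_a",
        "qa_user_2": "variation_b"
      }
    }
  ]
}
"""


def allowlisted_variation(datafile: dict, experiment_key: str, user_id: str) -> Optional[str]:
    """Return the variation key forced for `user_id`, or None if not allowlisted."""
    for experiment in datafile.get("experiments", []):
        if experiment.get("key") == experiment_key:
            return experiment.get("forcedVariations", {}).get(user_id)
    return None


datafile = json.loads(DATAFILE_FRAGMENT)
print(allowlisted_variation(datafile, "checkout_test", "qa_user_1"))  # variation_a
print(allowlisted_variation(datafile, "checkout_test", "someone_else"))  # None
```

You would not normally write this lookup yourself, because the Decide call applies the allowlist for you. The sketch is only useful for inspecting a datafile when an allowlisted user does not see the expected variation.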

Note

The forcedVariations field in the datafile is only related to allowlisted variations. It is not associated with the legacy Full Stack forced bucketing, which uses the Get Forced Variation or Set Forced Variation methods. Refer to the legacy documentation Use forced bucketing for more.

User allowlisting evaluates before these user bucketing methods:

Important

If there is a conflict over how a user should be bucketed, then the first user-bucketing method to be evaluated overrides any conflicting method. For more information, view the End-to-end Bucketing Workflow on How bucketing works.

When to use allowlisting

Use allowlisting to preview, experiment with, and QA flag rules with a small group of users:

- As a developer, you can use allowlisting to mock a datafile and test a feature flag or flag variable you are implementing.

- As a QA engineer, you can be added to an allowlist to perform manual tests of feature flags and flag variables in a web UI, or you can add a test runner's user ID to an allowlist to help automate these tests.

- You can use allowlisting in a mock datafile. Then copy that datafile and use it as a part of your unit or integration test suite rather than the actual datafile.
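One way to prepare such a mock datafile for a test suite is sketched below. It assumes the datafile has been loaded into a dictionary and that forcedVariations maps User IDs to variation keys; the helper `with_allowlisted_user`, the experiment key, and the test runner ID are hypothetical.

```python
import copy
from typing import Any, Dict

MAX_ALLOWLISTED_USERS = 50  # per-experiment limit stated on this page


def with_allowlisted_user(
    datafile: Dict[str, Any], experiment_key: str, user_id: str, variation_key: str
) -> Dict[str, Any]:
    """Return a copy of `datafile` with `user_id` forced into `variation_key`."""
    mock = copy.deepcopy(datafile)  # leave the shared fixture untouched
    for experiment in mock.get("experiments", []):
        if experiment.get("key") == experiment_key:
            forced = experiment.setdefault("forcedVariations", {})
            if user_id not in forced and len(forced) >= MAX_ALLOWLISTED_USERS:
                raise ValueError("allowlist already holds 50 users")
            forced[user_id] = variation_key
            return mock
    raise KeyError(f"experiment {experiment_key!r} not found in datafile")


# Hypothetical, trimmed fixture; a real datafile contains many more fields.
base_datafile = {"experiments": [{"key": "checkout_test", "forcedVariations": {}}]}
mock_datafile = with_allowlisted_user(
    base_datafile, "checkout_test", "test-runner-1", "variation_b"
)
print(mock_datafile["experiments"][0]["forcedVariations"])
```

The returned dictionary can be serialized with `json.dumps` and supplied to your tests in place of the actual datafile, so the test runner's User ID always lands in the variation under test.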

When not to use an allowlist

Allowlisting should not be used for large groups of users. Optimizely limits you to a maximum of 50 allowlisted users per experiment because:

- Forcing variations with a large number of User IDs will bias your experiment results.

- Allowlisting increases the size of the datafile. The smaller the datafile, the more performant the page will be.

To target an experiment to a larger group of users for QA, use an audience instead. Larger groups include:

- All employees in your organization.

- All users on a staging environment.

- Any number of users over 50.

Create an attribute that every user in the group will share, and target the experiment to an audience that contains that attribute.
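The shared-attribute approach can be pictured with the sketch below. It is a simplified stand-in for audience evaluation, which in practice is configured in Optimizely and evaluated by the SDK; the attribute name `is_employee` and the helper `matches_audience` are hypothetical, and only exact-match conditions are modeled.

```python
from typing import Any, Mapping


def matches_audience(user_attributes: Mapping[str, Any], required: Mapping[str, Any]) -> bool:
    """True when the user carries every required attribute with the exact value."""
    return all(
        name in user_attributes and user_attributes[name] == value
        for name, value in required.items()
    )


# Hypothetical audience: everyone flagged as an internal employee.
internal_qa_audience = {"is_employee": True}

employee = {"is_employee": True, "plan": "enterprise"}
customer = {"plan": "free"}

print(matches_audience(employee, internal_qa_audience))  # True
print(matches_audience(customer, internal_qa_audience))  # False
```

Because membership depends on an attribute rather than on a list of User IDs, the group can grow past 50 users without adding entries to the datafile.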

Create an allowlist

You can create an allowlist of up to 50 users for an A/B test rule or Multi-armed bandit optimization rule. You cannot create an allowlist on a targeted delivery rule.

-

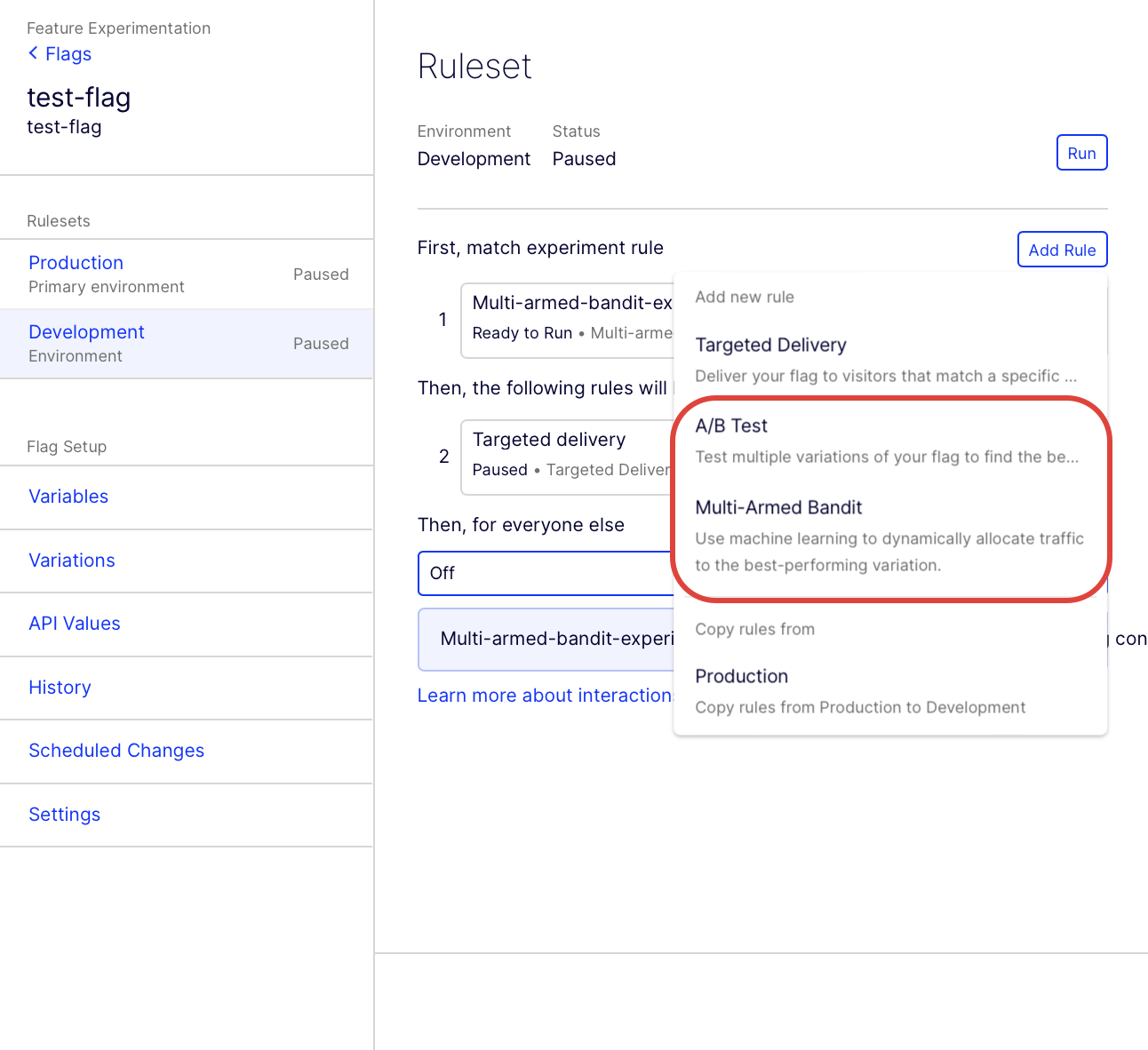

Go to the Flags dashboard and select the flag you have already created or click Create Flag to create one.

-

In the flag, click Add Rule and select A/B Test or Multi-Armed Bandit:

-

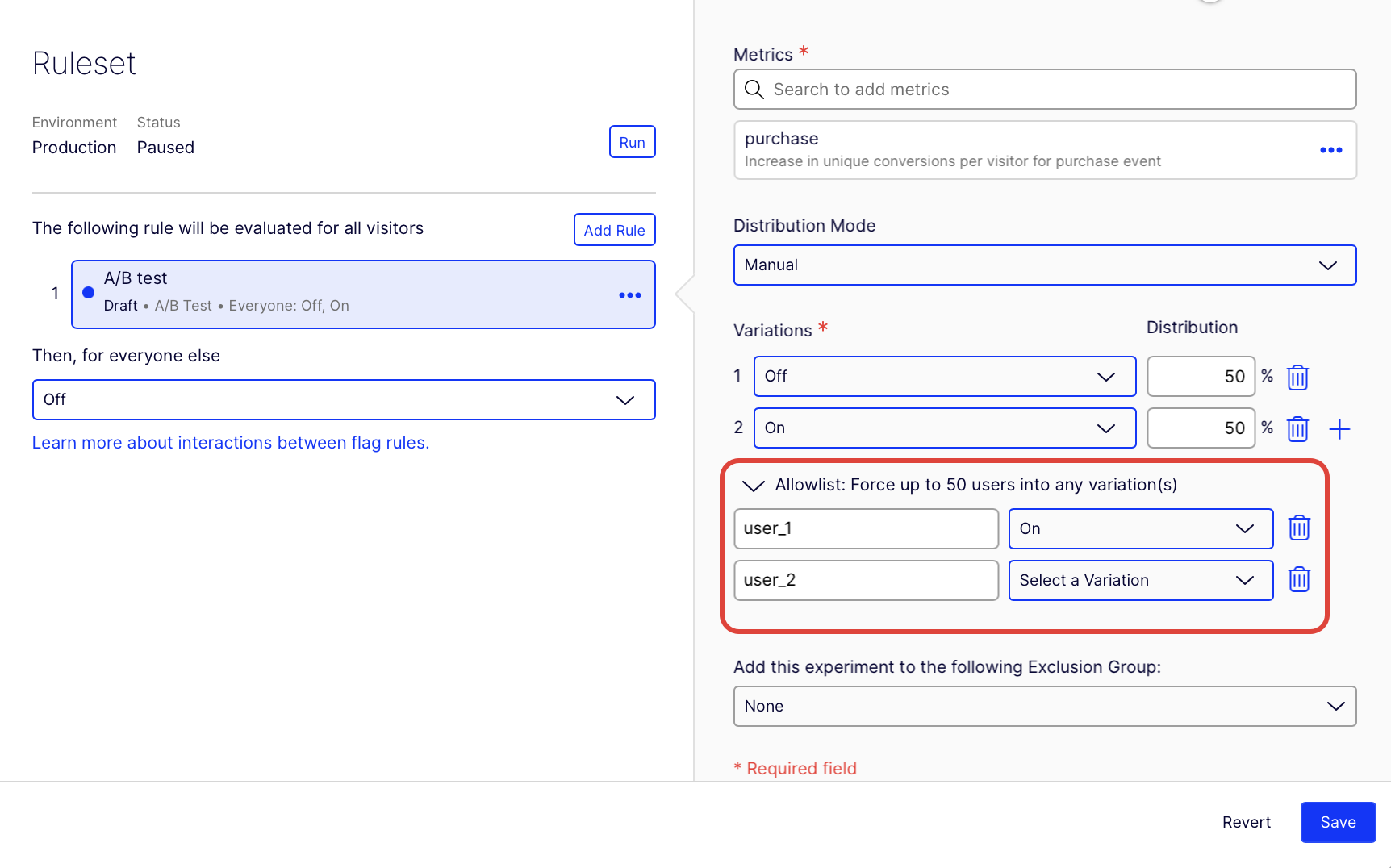

Expand the Allowlist: Force up to 50 users into any variation(s) section. Type the User ID and select the variation within the experiment you want to force the user into.

-

(Optional) Click delete to remove the allowlisted user. Click add to add another user.

-

Click Save.

Important

The User IDs used in the allowlist must match the User IDs passed through the Feature Experimentation SDK. Otherwise, allowlisting will not work. These User IDs are often anonymous and cryptic (for example, a cookie value), and you have to copy and paste them. See Handle user ids.
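Because the match must be exact, a quick comparison of the two ID sets can catch copy-and-paste mistakes. The sketch below is a hypothetical diagnostic helper, not part of the SDK: `find_id_mismatches` and the sample IDs are made up for illustration, and it assumes you can collect the User IDs your application passes to the SDK.

```python
from typing import Dict, Iterable, List


def find_id_mismatches(
    allowlist_ids: Iterable[str], sdk_user_ids: Iterable[str]
) -> Dict[str, List[str]]:
    """Report allowlist entries with no exact match among the IDs sent to the SDK."""
    sdk_ids = set(sdk_user_ids)
    normalized = {uid.strip().lower() for uid in sdk_ids}
    report: Dict[str, List[str]] = {"near_miss": [], "missing": []}
    for entry in allowlist_ids:
        if entry in sdk_ids:
            continue  # exact match, so allowlisting can apply
        # Entries that differ only by case or surrounding whitespace are near misses.
        kind = "near_miss" if entry.strip().lower() in normalized else "missing"
        report[kind].append(entry)
    return report


report = find_id_mismatches(
    ["anon-7f3a9c", "ANON-22b81d ", "qa-bot"],  # IDs typed into the allowlist
    ["anon-7f3a9c", "anon-22b81d"],             # IDs the application passes to the SDK
)
print(report)  # {'near_miss': ['ANON-22b81d '], 'missing': ['qa-bot']}
```

A near miss usually points to a stray space or changed letter case introduced while copying the ID, while a missing entry means the application never sends that ID at all.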
